NOTE: Since April 2020, we have been offering every one of our presentations and trainings in virtual modalities (e.g., Zoom, WebEx, Teams, Hopin, Skype). Reach out if you need specifics, as we’ve optimized the way we engage with our audiences from afar!

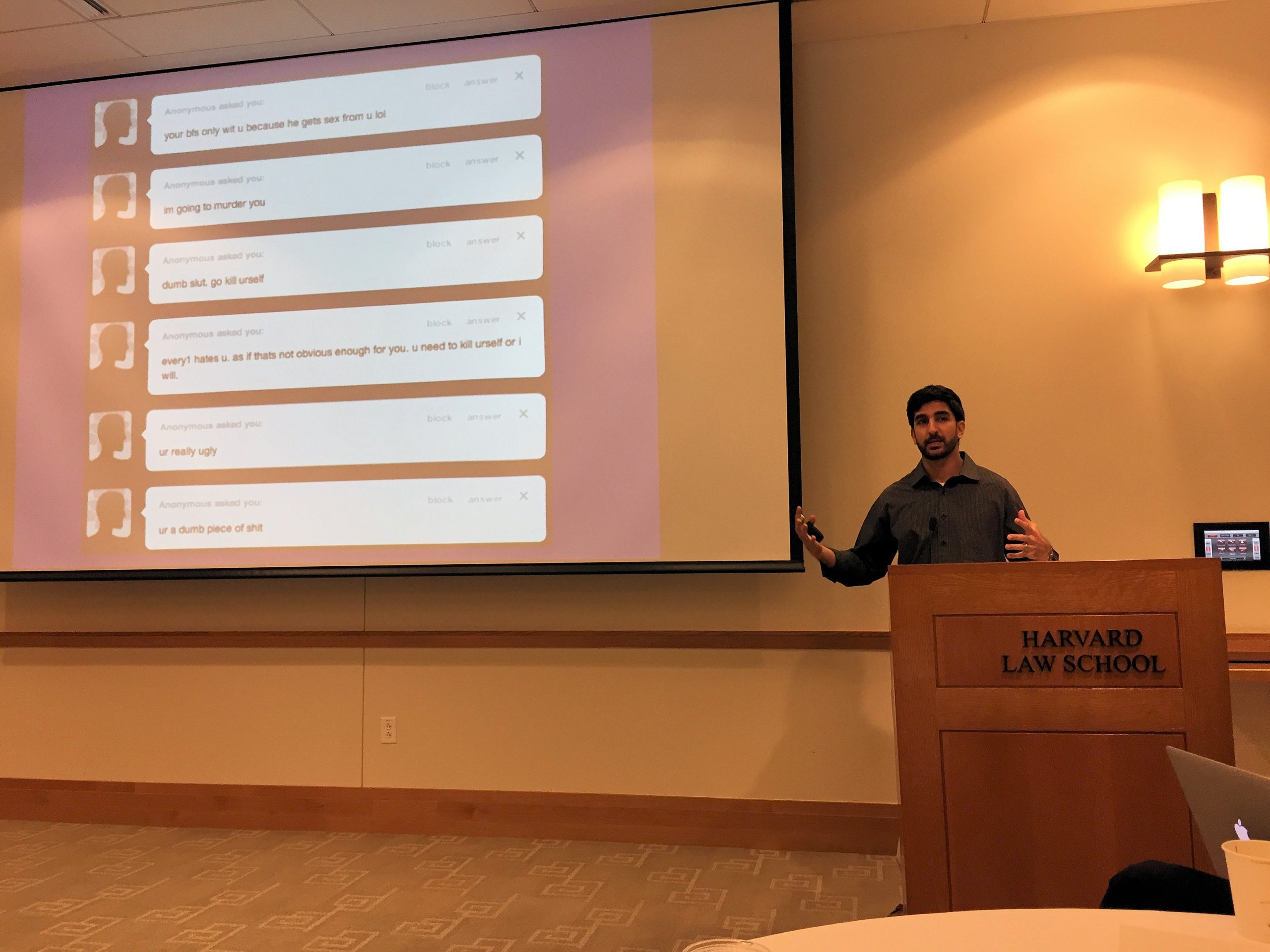

Our professional lives focus on promoting civility and preventing toxicity online, especially among youth. By combining social science with computer science, we have been able to make strides in this area. With regard to artificial intelligence (AI), the overarching goal is to preempt victimization by:

- identifying (and blocking, banning, or quarantining) the most problematic users and accounts

- immediately collapsing or deleting content that algorithms predictively flag and label as abusive

- promoting, elevating, or otherwise incentivizing civility and respect

- otherwise controlling the posting, sharing, or sending of messages that violate appropriate standards of behavior online.

Since almost all of us are on social media, we’ve witnessed (and perhaps even experienced) the haters, harassers, and trolls. It’s deeply upsetting, but progress is being made. We will explore the types of behaviors we’re trying to eliminate and the ways we’re seeking to enhance the mental health and well-being of all users through AI. We’ll also discuss the challenges we face and why this is an imperfect science. Ultimately, we want everyone to have positive experiences online, rather than being silenced, harassed, or otherwise victimized. AI can help, but it’s going to take some time.

(60 minutes)

Here are numerous testimonials from schools and other organizations with which we have worked.

Contact us today to discuss how we can work together!